Trevor Paglen practices an art of explication. Anyone who has attended his last several exhibitions knows this because his wall texts are so extensive. Indeed, without them the images are incomprehensible. But the word explication needs explicating. What I mean is that Paglen’s art, if it is art, is devoted to bringing into view things that are taken for granted, hidden, or poorly understood, all in a political sense. The explications are fundamentally abstract, and the images are illustrations, exempla, or by-products. This makes for a chilly gallery experience, but this exhibition was worth bundling up for. The images and large-scale video projection took us into a world of artificial intelligence machines as they recognized, processed, and learned from images. They do this at the behest of programmers, who are themselves paid and directed by institutions and commercial enterprises – and in several cases, by an artist.

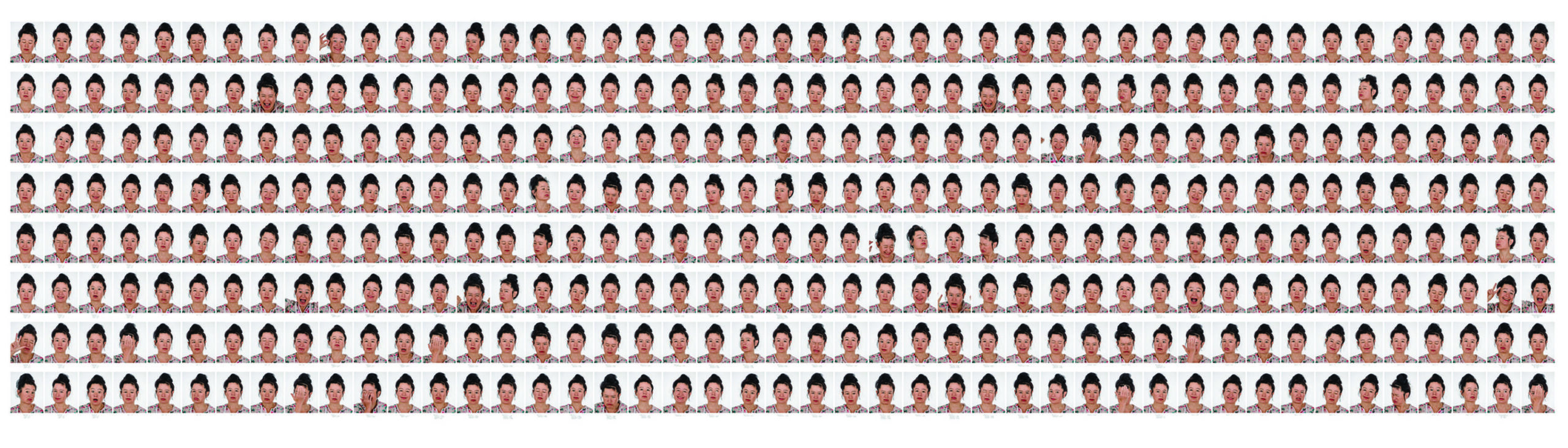

Of course, the programs don’t actually “see” anything. Images are mere data, parsed and ordered to educe certain patterns, and the outputs are for human users. For example, one wall of the gallery displayed a grid of hundreds of images of the German media theorist and artist Hito Steyerl making a variety of facial expressions. The AI was programmed to identify not only gender but also sexual orientation, emotion, and other, more fugitive qualities such as “saltiness.” Text under each image indicated salient – often hilarious – machine-derived descriptors. Paglen’s point seems to be that the reductive, culturally biased categories programmed into the AI yield equally reductive identifications. Garbage in, garbage out, as the programmers like to say. Behind this lies the darker question of who would benefit from such profiling.

The large-scale video displayed expanding and contracting grids of images as an AI program grew its capacity to recognize various kinds of human actions, from pushing a shopping cart to smiling. It also showed how the AI program broke down images to study them. The visual analogy to Eadweard Muybridge’s frame-by-frame studies of human locomotion was obvious, but the sense was that of seeing into a mechanical subconscious as it devoured reality and reordered it. Somewhere during this mind-assaulting presentation, we left the didactic, political domain behind and entered the visually unknown, the realm of our own inchoate emotions.

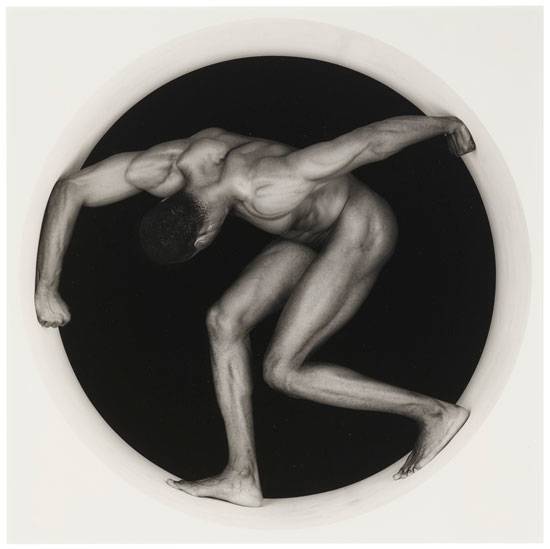

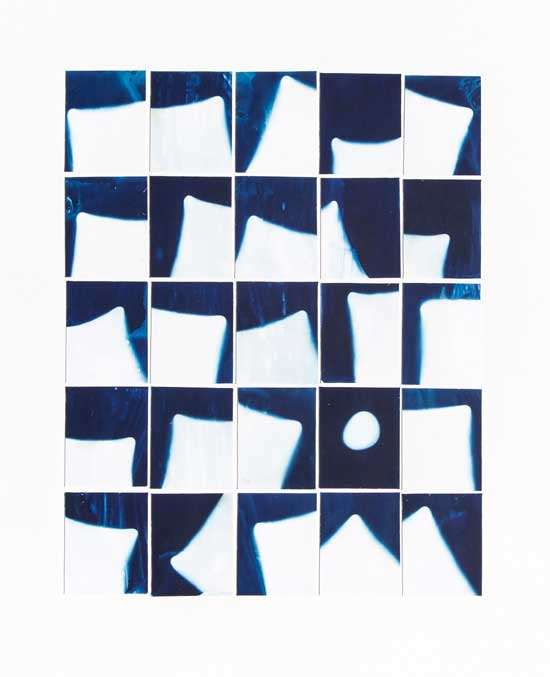

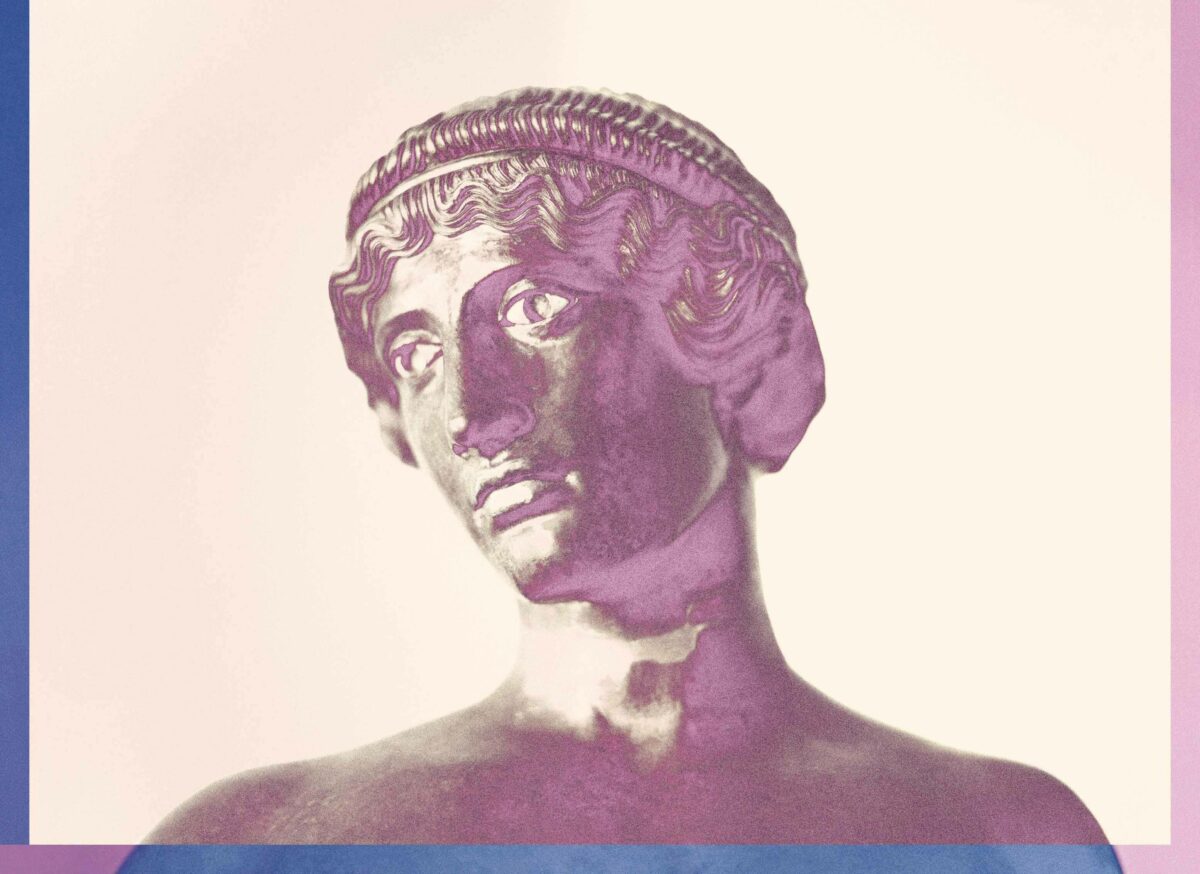

Which was perfect preparation for the most ambitious room of the exhibition. The blurry, quasi-abstract photographs on the walls represented outputs selected by Paglen from a strange variety of software learning duels. Paglen and his team programmed pairs of AI machines, one to recognize and learn Paglen-devised image sets, the other to try to deceive the first – and learn from its own mistakes. Here human beings were completely shut out of the communication, which revolved around such categories as images representing aspects of Sigmund Freud’s The Interpretation of Dreams and images representing American predators – everything from Venus flytraps to drones. The AI parsed these outlandish categories without comment, of course, and grew ever more adept at recognizing the variations on the unvisualizable, with Paglen intervening to pluck AI “mistakes” from the exchanges and put them on the wall as “adversarially evolved hallucinations.” The images were almost familiar, and uncanny for being that.

Long ago the sci-fi writer Philip K. Dick asked whether androids dream of electric sheep. Paglen has given us a glimpse of an answer in these monsters from the AI id.